
Introduction

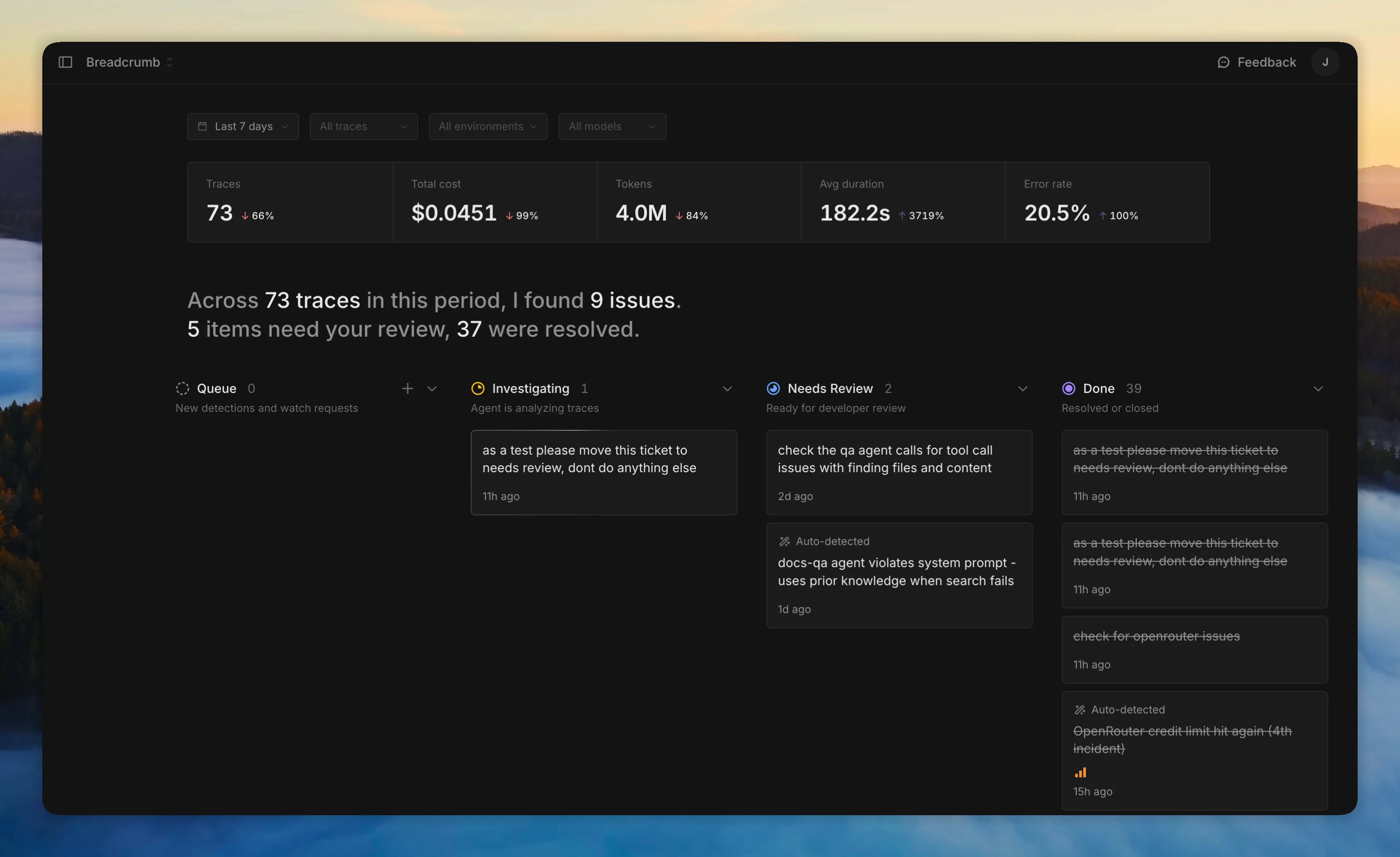

Your agents can hallucinate, lose context, and drift from their goal, all while returning a 200 OK. Breadcrumb traces every LLM call and runs an AI agent over your traces to catch the failures that status codes and dashboards miss.

Open source. Self-hosted. Get started in minutes.

Setup

Deploy Breadcrumb

Deploy with one click on Railway, or run it on your own server with Docker Compose. A hosted version is coming soon.

Add the SDK

Breadcrumb works with the Vercel AI SDK. For manual instrumentation, use the TypeScript SDK.
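To make "manual instrumentation" concrete, here is a minimal sketch of what wrapping an LLM call involves: recording each call's input, its output or error, and its latency. The `Span` type, the `traced` helper, the in-memory `recordedSpans` sink, and `fakeModel` are all hypothetical illustrations, not the Breadcrumb TypeScript SDK's API. Check the SDK reference for the real calls.

```typescript
// Hypothetical record of one traced LLM call. This is NOT the Breadcrumb SDK's
// schema; it only illustrates what manual instrumentation captures.
interface Span {
  name: string;
  input: string;
  output?: string;
  error?: string;
  startMs: number;
  durationMs: number;
}

// In-memory sink standing in for whatever exporter a real SDK would use.
const recordedSpans: Span[] = [];

// Wrap any async LLM call so its input, output or error, and latency are recorded.
async function traced(
  name: string,
  input: string,
  call: (input: string) => Promise<string>,
): Promise<string> {
  const startMs = Date.now();
  try {
    const output = await call(input);
    recordedSpans.push({ name, input, output, startMs, durationMs: Date.now() - startMs });
    return output;
  } catch (err) {
    // Record the failure, then rethrow so the caller's error handling is unchanged.
    recordedSpans.push({
      name,
      input,
      error: err instanceof Error ? err.message : String(err),
      startMs,
      durationMs: Date.now() - startMs,
    });
    throw err;
  }
}

// Hypothetical stand-in for a real model call.
const fakeModel = async (prompt: string): Promise<string> => `echo: ${prompt}`;
```

In an agent you would wrap each model call site, for example `traced("summarize", prompt, callModel)`, where `callModel` is your own (hypothetical here) function. Rethrowing after recording keeps the wrapper transparent to existing error handling.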

Enable monitoring

Configure an AI provider. The monitor then scans your traces and creates tickets when it finds problems.
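The monitoring loop can be pictured as: pass each trace to a judge, and open a ticket for every problem the judge reports. This is a rough sketch under stated assumptions. `Trace`, `Finding`, `Ticket`, `Judge`, and `scanTraces` are hypothetical names, not Breadcrumb's API. Breadcrumb's monitor uses a model from your configured AI provider as the judge; the sketch swaps in a simple rule-based judge (`emptyAnswerJudge`) so it runs offline.

```typescript
// Hypothetical trace record; field names are illustrative, not Breadcrumb's schema.
interface Trace {
  id: string;
  httpStatus: number;
  goal: string;
  finalOutput: string;
}

// A problem the judge found in one trace.
interface Finding {
  summary: string;
}

// A ticket opened for a problematic trace.
interface Ticket {
  traceId: string;
  title: string;
}

// The judge decides whether a trace has a problem. In Breadcrumb this role is
// played by an AI model; any function with this signature fits the sketch.
type Judge = (trace: Trace) => Promise<Finding | null>;

// Rule-based stand-in judge (an assumption, not Breadcrumb's method): flag
// traces that "succeeded" with HTTP 200 but returned an empty answer.
const emptyAnswerJudge: Judge = async (trace) =>
  trace.httpStatus === 200 && trace.finalOutput.trim() === ""
    ? { summary: `Empty answer despite HTTP 200 for goal "${trace.goal}"` }
    : null;

// Scan a batch of traces and open one ticket per finding.
async function scanTraces(traces: Trace[], judge: Judge): Promise<Ticket[]> {
  const tickets: Ticket[] = [];
  for (const trace of traces) {
    const finding = await judge(trace);
    if (finding !== null) {
      tickets.push({ traceId: trace.id, title: finding.summary });
    }
  }
  return tickets;
}
```

Keeping the judge behind a function type means an LLM-backed judge could replace the rule-based one without changing the scanning loop.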

Questions? Talk to us on X.